Nvidia has quietly built a massive networking division that rivals its core chip business, driven by the explosive growth of artificial intelligence. While the company is best known for its GPUs, its data center networking segment has become a critical and rapidly expanding revenue source, now exceeding $31 billion in annual revenue.

The Rise of AI Networking

Nvidia’s foray into networking began in 2020 with the $7 billion acquisition of Mellanox, a move that now appears prescient. The company’s networking technologies – including NVLink, InfiniBand, Spectrum-X, and co-packaged optics – are essential for creating “AI factories,” specialized data centers optimized for training AI models. Last quarter alone, the division generated $11 billion in revenue, a 267% increase year-over-year.

This growth is outpacing established networking giants: according to analysts at Zacks Investment Research, Nvidia’s networking revenue in a single quarter now exceeds Cisco’s entire networking business for the year. Despite this success, the segment receives less public attention than Nvidia’s chip or gaming divisions.

Why This Matters

Nvidia’s expansion into networking isn’t merely about diversification; it’s about controlling the entire AI infrastructure stack. By integrating GPUs with the high-speed networking required for AI training, Nvidia can offer a complete, optimized solution to its customers. This vertical integration is a key differentiator: Nvidia sells the full stack, through partners, rather than selling individual components.

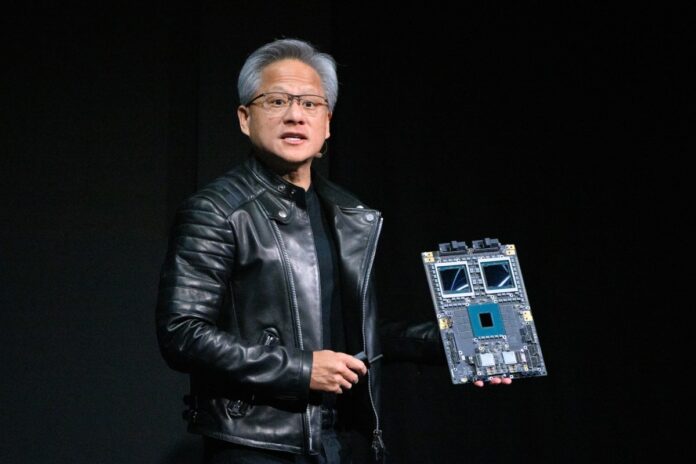

As Kevin Deierling, Nvidia’s senior vice president of networking, explains, CEO Jensen Huang recognized early on that the data center is now the fundamental unit of computing, which makes networking as essential as the chips themselves.

Full-Stack Dominance

Nvidia doesn’t just sell hardware; it sells a fully integrated system. This approach allows Nvidia to capture more value from the AI boom and lock customers into its ecosystem. The company recently unveiled the Rubin platform, including six new chips designed for “AI supercomputers,” along with advancements in Inference Context Memory Storage and Spectrum-X Ethernet Photonics switches.

“It’s no longer a peripheral to connect the printer… It’s fundamental to the computer. In the old days, we had what was called the backplane inside the computer. Today, the network is the backplane of the AI factory, and it’s super important.”

— Kevin Deierling, Nvidia’s Senior Vice President of Networking

Nvidia’s quiet expansion into networking positions it as a dominant force in the AI era, not just as a chip supplier but as a provider of the entire infrastructure needed to power the next generation of computing. The strategy is aimed at securing Nvidia’s long-term relevance and profitability in a rapidly evolving market.